Abstract

Objective: In New South Wales (NSW), influenza surveillance is informed by a number of discrete data sources, including laboratories, emergency departments, death registrations and community surveillance programs. The purpose of this study was to evaluate the NSW influenza surveillance system using the US Centers for Disease Control and Prevention guidelines for evaluating public health surveillance systems.

Importance of study: Having a strong influenza surveillance system is important for both seasonal and pandemic influenza preparedness. The findings will inform recommendations for strengthening surveillance in NSW.

Methods: The scope was limited to all sources included in the NSW Health Influenza Report in 2012–13. To assess the performance of the system, in-depth interviews (N = 21) were conducted with key stakeholders and thematically analysed. Respiratory testing data gathered through the sentinel laboratories in 2012 were used to estimate sensitivity, and laboratory notifications were analysed to assess timeliness and representativeness. Key documents – including reports, guidelines, correspondence and meeting minutes – were also reviewed, providing a method of triangulation.

Results: The NSW influenza surveillance system integrates multiple sources of surveillance of influenza and influenza-like illness to provide a comprehensive picture of influenza in the community. Despite its structural complexity, the system delivers quality, timely and relevant data to inform a range of public health activities, and the NSW Health Influenza Report is well regarded by stakeholders. Challenges include managing system complexity, key person risk and cross-jurisdictional issues. Stakeholders commented that system flexibility would depend on additional resourcing. Although the sensitivity of sentinel laboratory surveillance was estimated as 1–25%, depending on the time of year, understanding sensitivity remains a challenge in influenza surveillance where the true incidence of infection is unknown.

Conclusion: Influenza surveillance is critical for monitoring virological changes, understanding disease epidemiology and informing public health responses. The system was found to deliver timely and good-quality surveillance information. Additional value could be achieved by increasing flexibility and stability, automating systems (where possible) and formalising processes of data acquisition. The system continues to negotiate a number of constraints, including complexity and cross-jurisdictional issues, which are ongoing obstacles to realising some potential system improvements.

Full text

Introduction

Influenza is a highly contagious respiratory illness that is common in the winter months.1 Worldwide, around 20% of children and 5% of adults develop symptomatic influenza A or B each year.2 Although most influenza infections are self-limiting, few other diseases extract such a high socioeconomic toll from absenteeism, medical consultations, hospital admissions and economic loss.3 Influenza viruses are unique in their ability to cause both recurrent annual epidemics and serious pandemics.4 Surveillance is critical for monitoring virological changes and disease epidemiology, and informing public health responses.

According to the World Health Organization’s (WHO’s) Global Epidemiological Surveillance Standards (2013), the overarching goal of influenza surveillance is to minimise the impact of influenza by providing useful information to enable planning of appropriate control and intervention measures, and effectively allocate resources.5 In New South Wales (NSW), the NSW Health ‘Control guideline for public health units’ outlines the influenza surveillance objectives (Box 1).6

Box 1. NSW Health influenza surveillance objectives6

- Determine and monitor the stage, size and geographical spread of the influenza epidemic in the community

- Detect outbreaks in high-risk settings and implement appropriate control measures

- Better understand the epidemiology of the disease

- Determine the severity of the disease to inform appropriate disease control measures and health services planning

- Determine the influenza strains circulating in the community to inform vaccine development

- Determine resistance patterns of influenza circulating in the community to inform antiviral recommendations

- Facilitate further studies, where necessary, to investigate epidemiology, clinical features and vaccine effectiveness

Although confirmed influenza is a notifiable disease in NSW, it is assumed that only a small proportion of cases are laboratory tested.7 Surveillance for influenza thus uses a range of additional sources to monitor the onset, impact and duration of seasonal influenza, and forms the basis for pandemic surveillance. Over the past decade, data sources that have been used in surveillance include laboratory, general practice (GP) and emergency department data. Much of what is known as influenza surveillance is actually syndromic surveillance of influenza-like illness (ILI), which is considered a useful marker syndrome when multiple surveillance sources are triangulated and confounding factors are considered in interpretation of the data.8

The aim of the present study was to evaluate the performance of the NSW influenza surveillance system. The scope was limited to surveillance sources included in the NSW Health Influenza Report.

Influenza surveillance in NSW

At the time of this study, several surveillance sources existed in NSW, but not all were included in the Influenza Report. The report is the key system output compiled by the respiratory epidemiologist in the Communicable Diseases Branch (CDB) of NSW Health, using the sources described in Box 2. It is available weekly during the influenza season and monthly at other times of the year.9 The report is distributed to key stakeholders via email and the NSW Health website.

Box 2. The NSW influenza surveillance system

Surveillance sources included in the NSW Health Influenza Report

Emergency department surveillance (PHREDSS). PHREDSS extracts data from 59 hospitals in NSW10, based on provisional diagnoses of influenza-like illness (ILI) coded according to ICD-9, ICD-10 or the Systematized Nomenclature of Medicine.11 Data include information on age, sex, triage category and admission status. Data feeds are uploaded in near real time or in 4-hourly batches, and are monitored at least daily. PHREDSS produces a weekly report used by the Communicable Diseases Branch (CDB) of NSW Health for surveillance, with opportunities available for enhanced surveillance, if required.

Mortality data. All death registrations entered on the previous day at the NSW Registry of Births, Deaths and Marriages are loaded into the NSW Health PHREDSS database weekly. Pneumonia and influenza causes of death are selected, and a seasonal time-series model is fitted to forecast the average background mortality rate for the season. The forecast is then compared with the observed pneumonia and influenza mortality rate to show how far the observed rate departs from the rate expected from the trend.12 Data are transferred weekly to the CDB via email.

Laboratories. In 2012, there were 11 sentinel laboratory sites (nine public and two private), including two reference laboratories responsible for specialised strain characterisation and typing. Together, these sites accounted for 79% of all influenza notifications in 2012. Laboratories report weekly on the number of positive influenza results and respiratory tests undertaken. Data are submitted to the CDB via email, fax or an online database.

Most laboratories follow a scenario-based algorithm developed by NSW Health to guide referral of isolates to the NSW reference laboratories. The algorithm sets out the priorities for specimen referral in the interseasonal period and at different influenza activity stages during the season. The aim of the algorithm is to reduce the burden of referral at the height of the influenza season, when the workload on laboratories is heavy and the circulating strains are already well characterised.13

World Health Organization Collaborating Centre (WHOCC). The WHOCC performs an integral function for NSW surveillance, despite being based in Victoria. Specimens are referred for monitoring of antigenic drift and antiviral resistance, identification of novel strains, and development of the annual influenza vaccine. The WHOCC performs testing on an ad hoc basis unless prioritisation is requested, with results returned to the CDB towards the end of the season. In 2012, results were available for around 80% of specimens from NSW.

Public health units. Surveillance staff manually entered all laboratory-confirmed influenza notifications into the Notifiable Conditions Information Management System. Although notifications are not routinely included in the Influenza Report, the NSW Health website is updated daily with the numbers of laboratory-confirmed infections. Public health units are also responsible for monitoring influenza outbreaks in residential care facilities, which are included in the Influenza Report.

Other surveillance sources in NSW

A number of other community surveillance programs are in operation in NSW, but did not contribute to the Influenza Report at the time of this study. These include Flutracking, a weekly online community survey14; electronic general practitioner (GP) surveillance11 and ASPREN15 sentinel GP surveillance; and FluCAN sentinel hospital surveillance.16
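The mortality comparison described in Box 2 (observed rate against an expected seasonal background) can be illustrated with a short Python sketch. It is deliberately simpler than the seasonal time-series model used by NSW Health (reference 12): the baseline here is just the mean of the same week in earlier years, with a threshold of 1.96 standard deviations above that mean. The function names, the threshold and all rates are hypothetical and chosen only for illustration.

```python
from statistics import mean, stdev


def weekly_baseline(history):
    """Return (expected, upper_threshold) for each week.

    history maps a year to a list of weekly mortality rates; every year
    must have the same number of weeks. The baseline is the same-week
    mean across years, which is an assumed stand-in for a fitted
    seasonal time-series model.
    """
    years = sorted(history)
    if len(years) < 2:
        raise ValueError("at least two years of history are needed")
    n_weeks = len(history[years[0]])
    baseline = []
    for week in range(n_weeks):
        values = [history[year][week] for year in years]
        expected = mean(values)
        upper = expected + 1.96 * stdev(values)  # assumed threshold
        baseline.append((expected, upper))
    return baseline


def excess_weeks(observed, baseline):
    """Return the indexes of weeks where the observed rate exceeds the threshold."""
    return [
        week
        for week, (rate, (_, upper)) in enumerate(zip(observed, baseline))
        if rate > upper
    ]


# Hypothetical weekly rates for three earlier years (not study data).
history = {
    2009: [1.0, 1.2, 2.0],
    2010: [1.2, 1.0, 2.2],
    2011: [1.1, 1.1, 2.1],
}
observed = [1.1, 1.6, 2.1]  # hypothetical current-season rates

flagged = excess_weeks(observed, weekly_baseline(history))
print(flagged)
```

In this made-up example only the second week sits above its threshold. A fitted seasonal model would replace `weekly_baseline` but leave the observed-versus-expected comparison unchanged.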

Methods

In 2013, influenza surveillance in NSW was evaluated. As part of this evaluation, system performance was assessed in accordance with attributes set out in the US Centers for Disease Control and Prevention (CDC) guidelines for evaluating public health surveillance systems (Box 3).17 The CDC guidelines17 are well established and have commonly been applied to assess surveillance systems.18–20

Box 3. Surveillance system attributes defined in the CDC guidelines17

Usefulness: refers to the ability of the surveillance system to contribute to prevention and control of adverse health-related events

Simplicity: considers both system structure and ease of operation

Acceptability: encompasses the willingness of people and organisations to participate in the surveillance system

Sensitivity: refers to the proportion of cases of disease detected by the system. It can also refer to the system’s ability to detect outbreaks of disease and monitor changes in case numbers over time

Positive predictive value: measures the proportion of reported cases under surveillance that actually have influenza

Data quality: refers to the completeness and validity of data, and the processes of data acquisition

Stability: refers to the reliability and availability of the system

Flexibility: refers to the ability of the system to adapt to changing needs or operating conditions with little additional time, personnel or funding

Timeliness: is considered in terms of the availability of information to inform public health responses

Representativeness: refers to the ability of the system to accurately describe the occurrence of a health-related event over time, and its distribution in the population by place and person

Data collection to evaluate the surveillance system

To assess system performance, in-depth interviews were conducted with key stakeholders who were selected because they either played an integral role in collecting and providing surveillance information, or actively used the information gathered.21 Interviews followed a topic discussion guide, which covered the attributes of the surveillance system, its strengths and weaknesses, how surveillance information was being used, and areas where the system or its outputs could be improved. Interviews were recorded and reviewed repeatedly to enable detailed notes to be taken – this method has been documented as being cost-effective and theoretically sound.22 The data were analysed for emerging themes.

System sensitivity was estimated by calculating the proportion of the total number of respiratory tests that were positive for influenza, using respiratory testing data provided by the sentinel laboratories in 2012. Laboratory-confirmed influenza notifications recorded in the Notifiable Conditions Information Management System (NCIMS) were analysed to assess aspects of timeliness, data quality and representativeness. NCIMS notifications based on serology were excluded because they are not reliable indicators of current infection.
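The proportion calculation described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the function name `percent_positive_by_month`, the tuple layout of the laboratory reports and all counts are hypothetical, and are not taken from the sentinel laboratory dataset.

```python
from collections import defaultdict


def percent_positive_by_month(reports):
    """Return the percentage of respiratory tests positive for influenza, by month.

    reports is an iterable of (month, tests_performed, influenza_positive)
    tuples, for example one tuple per laboratory per reporting week.
    """
    tests = defaultdict(int)
    positives = defaultdict(int)
    for month, n_tests, n_positive in reports:
        if n_positive > n_tests:
            raise ValueError("positives cannot exceed tests performed")
        tests[month] += n_tests
        positives[month] += n_positive
    # Months with no tests are skipped to avoid dividing by zero.
    return {
        month: 100.0 * positives[month] / tests[month]
        for month in tests
        if tests[month] > 0
    }


# Hypothetical counts, for illustration only.
weekly_reports = [
    ("2012-01", 400, 4),
    ("2012-01", 600, 6),
    ("2012-07", 1000, 250),
]

result = percent_positive_by_month(weekly_reports)
print(result)
```

Counts are pooled across laboratories before dividing, so larger laboratories carry proportionally more weight than they would if each laboratory's percentage were averaged.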

A supplementary process of document review was undertaken to enable validation of information between sources.23 Documents included reports, guidelines, correspondence from key informants in the system, and minutes from surveillance meetings.

Ethics approval was granted by the University of New South Wales Human Research Ethics Advisory Panel G (approval number 9_12_042).

Results

A total of 21 interviews were conducted with a range of stakeholders from different agencies, comprising the NSW reference laboratories (n = 2), the World Health Organization Collaborating Centre (WHOCC) (n = 1), PHREDSS (emergency department surveillance; n = 1), a sample of staff (directors, surveillance officers and epidemiologists) from a selection of public health units in metropolitan and regional NSW (n = 10), the (then) Australian Government Department of Health and Ageing (n = 1), the National Centre for Immunisation Research & Surveillance (n = 1), the NSW Ministry of Health (n = 4), and the chief executive of a local health district (n = 1). The results are presented according to the CDC system attributes.

Usefulness

The surveillance system was said to be meeting most of its stated objectives (Box 1). However, stakeholders disagreed regarding the extent to which the system captured disease severity. Some stakeholders felt that, although PHREDSS was able to provide an indication of disease severity by capturing critical care admissions, more comprehensive severity information would be useful.

Stakeholders reported using surveillance information in a variety of ways, including in communication dispatches and media, planning and resource allocation, promoting vaccination among staff and patients, and epidemiological analysis.

Participants regarded the NSW Health Influenza Report as timely, useful and able to inform a range of public health responses, although some suggested that data from other surveillance sources (Flutracking, FluCAN) should also be included. NSW was recognised by the Australian Government as a valuable contributor to national surveillance.

Simplicity

Although the system was relatively inexpensive to operate, the need to integrate surveillance information from multiple data sources meant that the overall system was not considered simple. Individual data sources were identified by participants as having varying levels of complexity, with routine and automated systems considered the most simple.

Some of the sentinel laboratory sites did not have automated systems, and required manual extraction and reporting of data. Although this was reported by laboratory staff to be “mostly easy”, the potential for the process to become time consuming, particularly during peak season, was acknowledged to be substantial.

Acceptability

The routine provision of data by both PHREDSS and the sentinel laboratories within the required 1-week reporting period was taken as a measure of acceptability. Stakeholders indicated, however, that acceptability was likely to be dependent on the capacity and workload of those interacting with the system. Sources that were routine and automated (such as PHREDSS) were seen as the most acceptable in terms of operation. Stakeholders commented that, when their workload increased, such as during peak influenza activity, ability and willingness to undertake enhanced surveillance tasks decreased.

Stakeholder relationships played an important role in perceived acceptability. Participants reported that people and organisations were willing to participate in reporting where established relationships and processes were in place. For example, one stakeholder commented that gathering data from the sentinel laboratories was largely dependent on the availability of key informants to provide surveillance information. When key informants were not available to report data, reporting sometimes did not happen, and active follow-up was required.

Participants from laboratories commented on the role played by the scenario-based algorithm (which guides specimen referral at different stages of influenza activity)13 in increasing acceptability. This algorithm was said to streamline and reduce reporting requirements, especially at times when workload was high.

Sensitivity

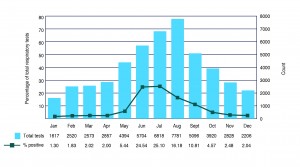

Stakeholders acknowledged that a large proportion of people with influenza do not undergo confirmatory testing, so obtaining reliable estimates of the incidence of influenza remains a challenge. Sensitivity can be gauged by monitoring the circulating influenza viruses and by using a range of surveillance indicators. Assessing diagnostic effort over time, by calculating the proportion of respiratory tests that are positive for influenza from the testing denominator data provided by the sentinel laboratories, can also provide an indication of sensitivity. In 2012, the sensitivity of sentinel laboratory surveillance was estimated to vary from 1% in the interseasonal period to 25% at the height of the season (Figure 1). The estimate at the peak of the season is consistent with data from a seroprevalence survey conducted in NSW following the 2009 pandemic, which calculated the population rate of pandemic H1N1 influenza infection to be 28%.24 However, a range of factors contribute to this result, including health-seeking behaviour, clinical testing practices and the prevalence of influenza.

Figure 1. Proportion of influenza-positive respiratory tests by month, 2012

Source: NSW sentinel laboratory surveillance, 2012

Stakeholders agreed that the virological data collected in the system were highly sensitive25 and stable over time. Further, informants in the laboratories noted that, under the new regulatory framework for in vitro diagnostic medical devices26, laboratories would be required to meet regulatory standards, which would increase the uniformity and quality of influenza tests.

Positive predictive value

Stakeholders agreed that virological data collected by the sentinel laboratories came from molecular tests that were highly specific.25 Statewide notification data included a small proportion (11%) of case reports based on serological testing, which were known to have a lower specificity for acute infections. However, there was no indication that this had a significant negative impact on influenza surveillance reporting or generated superfluous public health activity, such as outbreak investigations.

Data quality

Stakeholders reported that data quality in the key surveillance systems (sentinel laboratories, PHREDSS and mortality data) was consistently monitored, and data anomalies were actively addressed to ensure that the required data were complete. Stakeholders stated that this could be resource intensive, particularly in systems where data were manually reported, such as the sentinel laboratories.

The NCIMS was considered by stakeholders as having reasonable data quality for its purpose. However, because of issues with timeliness, it was not routinely relied upon, other than to capture notifications and derive the proportions of influenza A and B in circulation, which were reliably recorded for more than 99% of all confirmed infections.

In the sentinel laboratories, the absence of clinical information accompanying referred specimens meant that determinations about representativeness and estimates of vaccine effectiveness could not be made. Most stakeholders acknowledged that obtaining this clinical information was ideal; however, a number of practical constraints meant that this was unlikely to be achieved.

Stability

Stakeholders reported that the overall system was stable, with consistent input from several sources, including the sentinel laboratories, PHREDSS and the WHOCC. PHREDSS was identified as a key strength in terms of regular and automated provision of information. Stakeholders raised questions about the system’s stability should demand increase significantly. Of concern were the data from the sentinel laboratories, particularly given that data acquisition relied on established relationships with informants.

Flexibility

Stakeholders reported that the surveillance system was robust and a sound platform from which to develop a pandemic response, although the degree of flexibility would be resource dependent. They suggested that consideration should be given to capacity of individual systems to manage increased demand. For example, enhanced surveillance was noted to be resource dependent, which was of concern for both the public health units and the laboratories. Participants commented that, if this situation continued, additional staffing would be necessary. Stakeholders within the laboratories indicated that managing the increased workload during winter was already a challenge, and that the system had struggled to meet demand during the 2009 pandemic.

Stakeholders noted that some data sources, such as PHREDSS, had been designed with flexibility in mind. PHREDSS had been successfully and promptly adapted to enable monitoring of critical care admissions in response to needs arising early in the 2009 pandemic (before establishment of pandemic intensive care unit surveillance through the Australian and New Zealand Intensive Care Research Centre).27 However, although PHREDSS could be responsive, participants commented that any changes would involve additional work. The laboratories and the WHOCC both reported flexibilities that would enable a timely response to interesting, unusual or important cases – this would involve reporting priority cases directly to laboratory staff.

Timeliness

Timeliness varied across sources, but the overall system yielded timely information for its purpose. Reporting from the sentinel laboratories and the real-time monitoring offered by PHREDSS were both considered by participants as being timely, with both sources providing data at least weekly, as required. An examination of notification data in 2012 revealed that the average time between receiving a notification and entering it into the NCIMS was 1.7 days (range 0–141 days); the large range meant that the notification data were not considered to be reliably timely by stakeholders. Therefore, these data were not routinely included in the Influenza Report.
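The delay statistics reported above (a mean with a wide range) can be reproduced from pairs of dates, as in the following Python sketch. The function names and the sample dates are hypothetical and are not drawn from the NCIMS. The median is added only to show how a few very late entries pull the mean upwards.

```python
from datetime import date
from statistics import mean, median


def entry_delays(notifications):
    """Return the delay in days between receipt and data entry.

    notifications is an iterable of (received_date, entered_date) pairs.
    """
    delays = []
    for received, entered in notifications:
        delay = (entered - received).days
        if delay < 0:
            raise ValueError("entry date precedes receipt date")
        delays.append(delay)
    return delays


def summarise(delays):
    """Summarise delays as mean, median and range (all in days)."""
    return {
        "mean_days": mean(delays),
        "median_days": median(delays),
        "min_days": min(delays),
        "max_days": max(delays),
    }


# Hypothetical notifications, for illustration only.
sample = [
    (date(2012, 7, 2), date(2012, 7, 2)),   # entered the same day
    (date(2012, 7, 2), date(2012, 7, 3)),
    (date(2012, 7, 3), date(2012, 7, 5)),
    (date(2012, 3, 1), date(2012, 7, 20)),  # one very late entry
]

summary = summarise(entry_delays(sample))
print(summary)
```

With these made-up dates, a single late entry moves the mean far above the median, which is one reason a short average delay can coexist with a range that stakeholders regard as unreliable.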

Stakeholders stated that assessments of timeliness varied, depending on the use and purpose of information. For example, reporting from the WHOCC on strain characterisations, antiviral resistance and vaccine matching was not considered timely for clinical purposes, but was timely for informing public health action. The WHOCC was also noted to be able to respond in a timely way when needed.

Representativeness

Participants commented that the representativeness of surveillance information was adequate for its purpose, despite its being only an indication of influenza infections in the community.

Information from the sentinel laboratories was said to be reasonably representative. In 2012, the sentinel laboratories represented 79% of all influenza notifications in NSW.

PHREDSS covered 59 hospitals in NSW – about 85% of all public hospital emergency departments – and participants considered this a reasonable geographic representation of the state. PHREDSS was also thought to give a good indication of influenza in the community, as presentations to NSW emergency departments for ILI include patients with mild infections as well as those with more severe illness. Some stakeholders commented that hospital representation in southern NSW was lacking, with only one PHREDSS hospital in that region.

Discussion

The evaluation showed the NSW influenza surveillance system to be a complex network of discrete data sources. The overall system was found to perform well against the majority of the CDC surveillance system attributes and produced useful information that informed a number of public health activities. The key challenge for the system at the time of this study was in managing complexity. Although the diversity of surveillance sources was comprehensive, the integration of data to produce the Influenza Report was resource intensive, particularly where sources used manual data collection. The system was also observed to be heavily reliant on individuals who knew the system, its sources and informants well, and was thus subject to key person risk. For example, the process of collating data from the sentinel laboratories was dependent on established relationships with informants in the laboratories, and their availability and willingness to provide data. This was noted to be one of the more vulnerable aspects of the system, which would benefit from reinforcement to ensure stability of the system.

Another challenge was that some sources that provide critical input into the system operate in jurisdictions outside the NSW Health system. Since this is unlikely to change, the management of cross-jurisdictional issues will remain an ongoing challenge. For example, such ideals as improving the timeliness and communication of information from the WHOCC in Victoria will be subject to prioritisation of WHOCC activities; NSW Health is only one of multiple WHOCC stakeholders.

Given that the true incidence of influenza in the population is unknown, understanding sensitivity remains an ongoing challenge. That said, the use of respiratory testing denominator data allows more accurate interpretation of trends, including whether changes in case numbers are due to disease activity or changes in health-seeking behaviours and subsequent testing practices.

Stakeholders commented that system responsiveness would depend on additional resourcing. Strengthening the system to increase its flexibility would ensure that contingencies were in place to respond adequately to increased demand. Although Flutracking was not formally assessed, some stakeholders regarded it as a flexible system that would not depend heavily on additional resources.

Efforts to automate data collection systems, such as the implementation of electronic laboratory reporting (enabling automatic uploading of laboratory notifications to the NCIMS), were already in the pipeline. These measures should help improve the stability, timeliness, quality and overall usefulness of data, as well as increase the acceptability associated with data provision and collation. Steps to formalise the processes around data acquisition would also be of benefit, reducing the susceptibility of the system to key person risk, and increasing the reliability and stability of the system.

In response to findings from this study, the NSW Health Influenza Report now includes data from a wider range of surveillance sources. This includes FluCAN; however, given that only three NSW hospitals (Westmead Hospital, John Hunter Hospital and the Children’s Hospital at Westmead) are involved in FluCAN, more comprehensive severity information might improve the utility of surveillance information for some stakeholders. By the same token, expanding existing community-level sources (such as electronic GP surveillance) would improve surveillance of less severe disease, provide important epidemiological information on community infections and increase capacity for early detection.

This study has a number of limitations. Firstly, the scope of the study did not include a number of the related surveillance systems that provide information that is now included in the Influenza Report (Flutracking and FluCAN). Formal evaluations of these systems are currently under way. Secondly, although the evaluation sought to include a representative sample of stakeholders, the small sample size means that the full spectrum of opinions may not have been captured. Finally, the potentially subjective nature of qualitative methods is recognised; this risk was addressed through triangulation with document analysis, and comparisons across data and researchers.28 A consultative process around data interpretation was also undertaken to reduce potential bias in the analysis.

Conclusion

This study identified a robust influenza surveillance system that performed well against the majority of CDC surveillance system attributes. The system was found to provide high-quality and timely data. However, a number of challenges were documented, including system complexity and cross-jurisdictional issues. Although the system would benefit from strengthening in some specific areas, the study highlights a number of constraints around the system as a whole, which are ongoing challenges to the realisation of some improvements.

Acknowledgements

GD completed this work while she was a trainee on the NSW Public Health Training Program, funded by the NSW Ministry of Health. This work was undertaken on placement in the Communicable Diseases Branch of Health Protection NSW.

Copyright:

© 2016 Dawson et al. This article is licensed under the Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International Licence, which allows others to redistribute, adapt and share this work non-commercially provided they attribute the work and any adapted version of it is distributed under the same Creative Commons licence terms.

References

- 1. Charles J, Harrison C, Britt H. Influenza. Aust Fam Physician. 2008;37(10):793.

- 2. Turner D, Wailoo A, Nicholson K, Cooper N, Sutton A, Abrams K. Systematic review and economic decision modelling for the prevention and treatment of influenza A and B. Health Technol Assess. 2003;7(35):iii–iv, xi–xiii, 1–170.

- 3. Nicholson KG, Wood JM, Zambon M. Influenza. Lancet. 2003;362(9397):1733–45.

- 4. Cox NJ, Subbarao K. Influenza. Lancet. 1999;354(9186):1277–82.

- 5. World Health Organization. Global epidemiological surveillance standards for influenza. Geneva: WHO; 2013 [cited 2016 Feb 19]. Available from: www.who.int/influenza/resources/documents/WHO_Epidemiological_Influenza_Surveillance_Standards_2014.pdf?ua=1

- 6. NSW Health. Influenza: Control guideline for public health units. Sydney: NSW Government; 2014 [cited 2013 Apr 10]. Available from: www.health.nsw.gov.au/Infectious/controlguideline/Pages/influenza.aspx

- 7. Reed C, Chaves SS, Daily Kirley P, Emerson R, Aragon D, Hancock EB, et al. Estimating influenza disease burden from population-based surveillance data in the United States. PLoS One. 2015;10(3):e0118369.

- 8. Schindeler SK, Muscatello DJ, Ferson MJ, Rogers KD, Grant P, Churches T. Evaluation of alternative respiratory syndromes for specific syndromic surveillance of influenza and respiratory syncytial virus: a time series analysis. BMC Infect Dis. 2009;9:190.

- 9. NSW Health. Influenza surveillance reports. Sydney: NSW Government; 2016 [cited 2016 Feb 19]. Available from: www.health.nsw.gov.au/Infectious/Influenza/Pages/reports.aspx

- 10. NSW Health. Public Health Real-time Emergency Department Surveillance System (PHREDSS) Public Health Unit Response. Sydney: NSW Government; 2010 [cited 2016 Feb 19]. Available from: www0.health.nsw.gov.au/policies/gl/2010/pdf/GL2010_009.pdf

- 11. Liljeqvist GTH, Staff M, Puech M, Blom H, Torvaldsen S. Automated data extraction from general practice records in an Australian setting: trends in influenza-like illness in sentinel general practices and emergency departments. BMC Public Health. 2011;11:435.

- 12. Muscatello DJ, Morton PM, Evans I, Gilmour R. Prospective surveillance of excess mortality due to influenza in New South Wales: feasibility and statistical approach. Commun Dis Intell Q Rep. 2008;32(4):435–42.

- 13. Communicable Diseases Branch. Referral for supplementary testing to ICPMR of SEALS. Sydney: Health Protection NSW; 2012 [Internal document].

- 14. Dalton CB, Carlson SJ, Butler MT, Feisa J, Elvidge E, Durrheim DN. Flutracking weekly online community survey of influenza-like illness annual report, 2010. Commun Dis Intell Q Rep. 2011;35(4):288–93.

- 15. Parrella A, Dalton CB, Pearce R, Litt JC, Stocks N. ASPREN Surveillance System for Influenza-like Illness – a Comparison with Flutracking and the National Notifiable Diseases Surveillance System. Aust Fam Physician. 2009;38(11):932–6.

- 16. Kelly PM, Kotsimbos T, Reynolds A, Wood-Baker R, Hancox B, Brown SG, et al. FluCAN 2009: initial results from sentinel surveillance for adult influenza and pneumonia in eight Australian hospitals. Med J Aust. 2011;194(4):169–74.

- 17. German RR, Lee LM, Horan JM, Milstein RL, Pertowski CA, Waller MN, Guidelines Working Group Centers for Disease Control and Prevention. Updated guidelines for evaluating public health surveillance systems: recommendations from the Guidelines Working Group. MMWR Recomm Rep. 2001;50(RR–13):1–35.

- 18. Guy RJ, Kong F, Goller J, Franklin N, Bergeri I, Dimech W, et al. A new national chlamydia sentinel surveillance system in Australia: evaluation of the first stage of implementation. Commun Dis Intell Q Rep. 2010;34(3):319–28.

- 19. He S, Zurynski YA, Elliott EJ. Evaluation of a national resource to identify and study rare diseases: the Australian Paediatric Surveillance Unit. J Paediatr Child Health. 2009;45(9):498–504.

- 20. Halliday LE, Peek MJ, Ellwood DA, Homer C, Knight M, McLintock C, et al. The Australasian Maternity Outcomes Surveillance System: an evaluation of stakeholder engagement, usefulness, simplicity, acceptability, data quality and stability. Aust N Z J Obstet Gynaecol. 2013;53(2):152–7.

- 21. Miles MB, Huberman AM. Qualitative data analysis: an expanded sourcebook. Thousand Oaks, CA: Sage Publications; 1994.

- 22. Halcomb EJ, Davidson PM. Is verbatim transcription of interview data always necessary? Appl Nurs Res. 2006;19(1):38–42.

- 23. Bowen GA. Document analysis as a qualitative research method. Qualitative Research Journal. 2009;9(2):27–40.

- 24. Gilbert GL, Cretikos MA, Hueston L, Doukas G, O'Toole B, Dwyer DE. Influenza A (H1N1) 2009 antibodies in residents of New South Wales, Australia, after the first pandemic wave in the 2009 southern hemisphere winter. PLoS One. 2010;5(9):e12562.

- 25. Ellis J, Iturriza M, Allen R, Bermingham A, Brown K, Gray J, Brown D. Evaluation of four real-time PCR assays for detection of influenza A(H1N1)v viruses. Euro Surveill. 2009;14(22).

- 26. Therapeutic Goods Administration. The regulatory requirements for in-house IVDs in Australia. Canberra: Australian Government Department of Health; 2012 [cited 2016 Feb 19]. Available from: www.tga.gov.au/publication/regulatory-requirements-house-ivds-australia

- 27. NSW Health. Report on intensive care unit surveillance systems operational during the pandemic (H1N1) 2009 influenza response. Sydney: NSW Government; 2010 [cited 2016 Feb 19]. Available from: www.health.nsw.gov.au/pandemic/Documents/icu_pandemic_surveillance_in_2009.pdf

- 28. Mays N, Pope C. Assessing quality in qualitative research. BMJ. 2000;320(7226):50–2.