Abstract

Objectives and importance of study: Evaluating the impacts of quality improvement activities across diverse clinical focus areas is challenging. However, evaluation is necessary to determine whether the activities had an impact on quality of care and resulted in system-wide change. Clinical networks of health providers aim to provide a platform for accelerating quality improvement activities and adopting evidence-based practices. However, most networks do not collect primary data that would enable evaluation of impact. We adapted an established expert panel approach to measure the impacts of efforts by 19 clinical networks to improve care and promote health system change, and thereby to determine whether these efforts achieved their purpose.

Study type: A retrospective cross-sectional study of 19 clinical networks using multiple methods of data collection including the EXpert PANel Decision (EXPAND) method.

Methods: Network impacts were identified through interviews with network managers (n = 19) and co-chairs (n = 32), and document review. The EXPAND method brought together five independent experts who provided initial individual ratings of overall network impact. After attending an in-person moderated meeting at which aggregate scores were discussed, the experts provided a final rating. Median scores of postmeeting ratings were the final measures of network impact.

Results: Among the 19 clinical networks, experts rated 47% (n = 9) as having a limited impact on improving quality of care, 37% (n = 7) as having a moderate impact and 16% (n = 3) as having a high impact. The experts rated 26% (n = 5) of clinical networks as having a limited impact on facilitating system-wide change, 37% (n = 7) as having a moderate impact and 37% (n = 7) as having a high impact.

Conclusion: The EXPAND method enabled appraisal of diverse clinical networks in the absence of primary data that could directly evaluate network impacts. The EXPAND method can be applied to assess the impact of quality improvement initiatives across diverse clinical areas to inform healthcare planning, delivery and performance. Further research is needed to assess its reliability and validity.


Introduction

Policy makers and health service providers need data to inform their decisions about investment in effective quality improvement, but they lack systematic approaches to evaluating the impacts of different quality improvement activities. Clinical networks provide a structure for connecting professionals across institutions and clinical specialties to implement quality improvement activities.1,2 However, few studies have assessed networks’ effectiveness in implementing evidence-based care. One reason for this is the lack of a tool for measuring networks’ impact on improving quality of care and implementing system-wide change, particularly for networks that operate in large health systems and span multiple clinical disciplines within a geographical region.

Generally, it is not possible to select one indicator of success (or outcome) that is common between networks in diverse clinical areas. For example, disease-free survival, readmission rates or mortality rates will be more applicable as indicators for some clinical networks than for others. Similarly, the selection of different patient care indicators for each network (e.g. time to thrombolysis for a stroke network or HbA1c testing for an endocrinology network) will not control for potential bias resulting from selecting outcomes that are more or less difficult to alter, or more or less clinically significant, in different networks. In this paper, we detail a novel method to assess impacts across networks in diverse clinical areas, to support evaluations and future investment decisions.

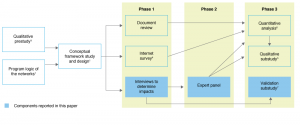

This paper reports the impact of quality improvement activities undertaken by 19 clinical networks in New South Wales (NSW), Australia. These networks were involved in a retrospective cross-sectional study (Figure 1) designed to investigate the external support, organisational and program factors associated with successful clinical networks.1,3-6 NSW clinical networks have similarities to clinical networks that operate in the UK, other parts of Europe and the US in that they are linked, voluntary groups of health professionals working in a coordinated manner to support provision of high-quality and effective clinical services. These state-funded clinical networks have a system-wide focus; clinicians identify and advocate for models of service delivery (e.g. outreach services, new equipment) and quality improvement initiatives (e.g. models of care, training and education). Clinical networks facilitate implementation of agreed-on changes in collaboration with healthcare funders and providers.

Figure 1. Study overview with data collection and analysis components reported in this paper highlighted

To measure the overall impact of NSW clinical networks, we adapted an expert consensus approach. The use of expert panels provides a structured means of interpreting available information and reaching a consensus about issues where there is limited or variable evidence.8,9 The potential value of the method is its focus on implicit measurement of impacts (in terms of magnitude and importance) across diverse clinical focus areas through expert judgment in lieu of an explicit gold standard (such as comparative chart-based or cost–benefit measures). More broadly, this provides evidence about whether clinical networks are successful or not and can be used to inform the future design of clinical networks.

The two aims of this paper are to:

1. Describe the EXpert PANel Decision (EXPAND) method to enable future replication and/or adaptation.

2. Report the impact of clinical networks on improving quality of care and/or facilitating system-wide change, assessed using the EXPAND method.

Methods

Sample of networks and quality improvement activities

Clinical networks in operation in NSW, Australia, during a 3-year study period (2006–2008) were eligible for recruitment to the study.1 All network managers (n = 19) and network co-chairs (n = 32) who worked in or chaired a clinical network during the study period were invited to be interviewed. Interviews aimed to identify the impacts of network quality improvement activities on improving quality of care and/or facilitating system-wide change, and how and why they occurred. A total of 312 quality improvement activities (such as education for implementation into practice, consumer resources, and development of systems, processes or models of care), undertaken between 2006 and 2008 and identified in a retrospective document review study, were included in the assessment of impacts.10

Design

This was a retrospective cross-sectional study of 19 clinical networks using multiple methods of data collection, including the EXPAND method (see Figure 1, and the STROBE checklist in Supplementary File 1, available from: ses.library.usyd.edu.au/handle/2123/17773).

Data sources – identification of impacts

We assessed impacts that had occurred by the end of 2011 as a result of network activities undertaken during the study period, to allow sufficient time for changes to occur (i.e. between 3 and 5 years after the quality improvement activities were implemented). Evidence of impact on improving quality of care was defined as clinical networks having improved the safety, effectiveness, appropriateness, accessibility, efficiency or patient-centredness of care.11 Such improvements were demonstrated with direct evidence of changes in clinical practice or with patient-level data showing a change. Evidence of impact on facilitating system-wide change was defined as clinical network activities being adopted on a larger scale and improving the wider health system. Such change could be implemented on a moderate scale or across an entire system.

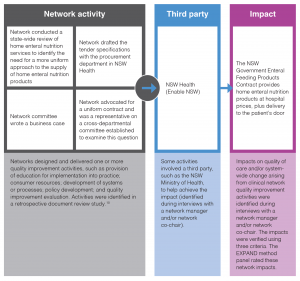

Impacts identified by network managers and co-chairs in interviews were verified using three criteria: 1) the impact met the definitions of improving quality of care and/or facilitating system-wide change; 2) the network was largely responsible for the impact occurring; and 3) there was independent documentary evidence of the impact. Documentary evidence could take the form of evaluation reports, funding agreements, utilisation rates for new clinical services or similar. Quality assurance measures, such as use of a coding manual and coding review by a second team member, were undertaken. A validation substudy was conducted to verify whether the impacts identified in the interviews were attributable to network actions, and its results showed that the networks provided accurate information.7 Figure 2 illustrates the link between quality improvement activities and impacts on quality of care and system-wide change, and how this relates to the EXPAND method.
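The three verification criteria work as a conjunctive filter: a reported impact was kept only if it satisfied all three. The Python sketch below illustrates that logic under stated assumptions; the `ReportedImpact` record, its field names and the example impacts are hypothetical and are not the study's coding scheme.

```python
from dataclasses import dataclass


@dataclass
class ReportedImpact:
    """Hypothetical record for one impact reported in an interview."""
    network: str
    description: str
    meets_definition: bool             # criterion 1: fits the quality-of-care and/or system-wide change definition
    network_largely_responsible: bool  # criterion 2: the network was largely responsible
    has_documentary_evidence: bool     # criterion 3: independent documentary evidence exists


def is_verified(impact: ReportedImpact) -> bool:
    """An impact counts only when all three verification criteria are met."""
    return (impact.meets_definition
            and impact.network_largely_responsible
            and impact.has_documentary_evidence)


def verified_impacts(reported: list[ReportedImpact]) -> list[ReportedImpact]:
    return [impact for impact in reported if is_verified(impact)]


# Hypothetical interview reports for two illustrative networks
reported = [
    ReportedImpact("Network A", "new clinical guideline", True, True, True),
    ReportedImpact("Network A", "new outreach service", True, True, False),
    ReportedImpact("Network B", "education sessions", False, True, True),
]
print([i.description for i in verified_impacts(reported)])  # ['new clinical guideline']
```

A network whose reports all fail the filter ends up with no verified impacts, which is the situation of the two networks described in the Results.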

Figure 2. Template and example of the visual representation of an impact resulting from network activities

Procedure – the EXpert PANel Decision (EXPAND) method to assess impact

The RAND/UCLA appropriateness method has been widely used to derive expert consensus on clinical indications.12 It combines expert opinion with a systematic review of scientific evidence to produce indications of appropriateness that can be used for review criteria, practice guidelines or both. Researchers at the US Veterans Health Administration (VA) have led international efforts to adapt the appropriateness method to decision making within a national healthcare system.13 The VA method uses a consensus process informed by literature, data and expert opinion. The method has been applied to assess the appropriateness of quality improvement activities for improving health or quality of care.13,14 We adapted these established expert consensus approaches to design the EXpert PANel Decision (EXPAND) method.

A network impact dossier was compiled for each of the 19 clinical networks, comprising a visual representation of every impact (Figure 2), a summary of its context and independent documentary evidence that the impact had been realised.

The network impact dossiers were assessed by EXPAND method panel members (Figure 3). The five panel members were independent of the clinical networks and were chosen for their ability to judge impacts of diverse activities in a range of clinical areas. The panel members had extensive senior management experience in implementing and evaluating innovation and system-wide change. None of the panel members were practising clinicians at the time of rating the networks. Four of the panel members were from two Australian states other than NSW and one was from the US. A chair (EMY), with expertise in expert panel methodology, led a moderated meeting of the EXPAND method panel.

Figure 3. EXPAND method

The EXPAND method panel members made relative judgements about the impact of the 19 networks’ quality improvement activities, based on the evidence in the network impact dossiers. Panel members independently rated each network (premeeting ratings) on its impact on improving quality of care and its impact on facilitating system-wide change using a nine-point scale: scores 1–3 indicated limited impact, scores 4–6 indicated moderate impact and scores 7–9 indicated high impact. Following the initial rating, a moderated face-to-face meeting of the EXPAND method panel was conducted in April 2012, during which aggregated premeeting ratings were presented. At the meeting, the panel members discussed any discrepancies in their ratings, the quality of the evidence of impacts provided in the network impact dossiers, and the reasoning each member used to arrive at their ratings. At the end of the meeting, each panel member independently rerated each network (postmeeting ratings).
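The nine-point scale and its three bands, combined with the median rule described in the Analysis, can be sketched in Python as follows. This is an illustration, not the study's software; the function names and the example ratings are hypothetical. A score of 0 is accepted because, as reported in the Results, networks with no verified impacts were scored 0 and counted as limited impact.

```python
from statistics import median


def impact_category(score: int) -> str:
    """Map a score to the impact bands: 1-3 limited, 4-6 moderate, 7-9 high.

    0 is allowed for networks with no verified impacts (counted as limited).
    """
    if not 0 <= score <= 9:
        raise ValueError(f"score out of range: {score}")
    if score <= 3:
        return "limited"
    if score <= 6:
        return "moderate"
    return "high"


def final_rating(postmeeting: list[int]) -> int:
    """Median of the postmeeting ratings; an odd panel size keeps it a whole score."""
    if len(postmeeting) % 2 == 0:
        raise ValueError("expects an odd number of panel members")
    return median(postmeeting)


# Hypothetical postmeeting ratings from five panel members for one network
ratings = [4, 6, 7, 5, 6]
score = final_rating(ratings)
print(score, impact_category(score))  # 6 moderate
```

With five panel members the median is always one of the submitted scores, so every network falls cleanly into one band.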

Analysis

The median score from the EXPAND method panel members’ postmeeting ratings was used as the final measure of each network’s impact on improving quality of care and facilitating system-wide change. We assessed disagreement among members’ ratings using the Interpercentile Range Adjusted for Symmetry (IPRAS), a dispersion criterion recommended by the RAND/UCLA appropriateness method; the 33rd and 67th percentiles (the tertile cut points) defined the interpercentile range. Intraclass correlation coefficients (ICC) were calculated to measure the level of agreement among EXPAND method panel members’ rating scores of network impacts, separately for ratings made before (premeeting ratings) and after the moderated meeting (postmeeting ratings).
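The IPRAS criterion flags disagreement when the interpercentile range (IPR) of a network’s ratings is wider than a threshold that grows as the ratings drift away from the centre of the scale. The Python sketch below assumes the formula from the RAND/UCLA appropriateness method user’s manual (IPRAS = 2.35 + 1.5 × |5 − IPR central point|) together with the 33rd and 67th percentiles used in this study; the percentile interpolation rule (linear, as in NumPy’s default) and the example ratings are assumptions, since the text does not specify them.

```python
def percentile(values: list[float], p: float) -> float:
    """Percentile by linear interpolation between order statistics (assumed rule)."""
    xs = sorted(values)
    if not xs:
        raise ValueError("need at least one rating")
    rank = (p / 100) * (len(xs) - 1)
    lower = int(rank)                    # order statistic at or below the rank
    upper = min(lower + 1, len(xs) - 1)
    return xs[lower] + (xs[upper] - xs[lower]) * (rank - lower)


def ipras_check(ratings: list[float], low_pct: float = 33.0, high_pct: float = 67.0,
                ipr_required: float = 2.35, asymmetry_factor: float = 1.5):
    """Return (IPR, IPRAS, disagreement) for one network's panel ratings.

    The 2.35 and 1.5 constants are the RAND/UCLA manual defaults (assumed here).
    """
    p_low = percentile(ratings, low_pct)
    p_high = percentile(ratings, high_pct)
    ipr = p_high - p_low
    central_point = (p_low + p_high) / 2
    asymmetry_index = abs(5 - central_point)  # 5 is the midpoint of the 1-9 scale
    ipras = ipr_required + asymmetry_factor * asymmetry_index
    return ipr, ipras, ipr > ipras


# Hypothetical ratings from five panel members
print(ipras_check([4, 6, 7, 5, 6]))  # clustered ratings: disagreement is False
print(ipras_check([1, 2, 5, 8, 9]))  # polarised ratings: disagreement is True
```

The asymmetry adjustment widens the allowed range for ratings that sit near one end of the scale, where panels tend to cluster less symmetrically.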

Ethics approval

The study was approved by the University of Sydney Human Research Ethics Committee in August 2011 (ID: 13988).

Results

Impacts arising from quality improvement activities undertaken by the clinical networks

At least one representative (network manager and/or network co-chair) from each of the 19 clinical networks consented to an interview to identify impacts resulting from network quality improvement activities. This resulted in the identification of 51 impacts on improving quality of care and/or facilitating system-wide change that met the three verification criteria (see Supplementary File 2 for impact examples, available from: ses.library.usyd.edu.au/handle/2123/17773). The number of impacts achieved by the clinical networks ranged from 0 to 8, with an average of 2.68 impacts per network, a median of 2 impacts and a mode of 4 impacts. New clinical practice guidelines and new service establishment were the most common types of impacts (Figure 4). Seventeen of the 19 clinical networks made an impact on improving quality of care and/or facilitating system-wide change. Two networks did not have any impacts that met the three verification criteria; they were scored as 0 and included in the limited impact category.

Figure 4. Number and types of impacts that arose from clinical network quality improvement activities

EXPAND method panel member ratings

The EXPAND method panel members rated 47% (n = 9) of clinical networks as having a limited impact on improving quality of care, 37% (n = 7) as having a moderate impact and 16% (n = 3) as having a high impact. The panel members rated 26% (n = 5) of clinical networks as having a limited impact on facilitating system-wide change, 37% (n = 7) as having a moderate impact and 37% (n = 7) as having a high impact. For every clinical network, members’ ratings on both impact measures showed no disagreement under the RAND/UCLA IPRAS criterion.
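The reported percentages are the category counts divided by the 19 networks and rounded to whole numbers. A short Python check of that arithmetic (the dictionary layout is only an illustration):

```python
def category_percentages(counts: dict[str, int]) -> dict[str, int]:
    """Convert category counts to whole-number percentages of all networks."""
    total = sum(counts.values())
    return {category: round(100 * n / total) for category, n in counts.items()}


quality_of_care = {"limited": 9, "moderate": 7, "high": 3}
system_wide_change = {"limited": 5, "moderate": 7, "high": 7}

print(category_percentages(quality_of_care))     # {'limited': 47, 'moderate': 37, 'high': 16}
print(category_percentages(system_wide_change))  # {'limited': 26, 'moderate': 37, 'high': 37}
```

In both cases the counts sum to 19 and the rounded percentages sum to 100.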

Agreement among members’ rating scores of network impacts improved from before to after the moderated meeting, both for impact on improving quality of care (ICC 0.36 premeeting and 0.84 postmeeting) and for impact on facilitating system-wide change (ICC 0.40 premeeting and 0.89 postmeeting). A high level of agreement among member ratings was achieved only after the moderated meeting.
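The text does not state which form of ICC was used. As one common choice for a fixed panel rating every network, the Python sketch below computes ICC(2,1) from Shrout and Fleiss (two-way random effects, absolute agreement, single rater) from a networks × panel members table of scores; treat the choice of form as an assumption. The worked example is Shrout and Fleiss’s six-target, four-judge dataset, for which ICC(2,1) is about 0.29.

```python
def icc_2_1(ratings: list[list[float]]) -> float:
    """ICC(2,1): rows are networks (targets), columns are panel members (raters)."""
    n = len(ratings)     # number of networks
    k = len(ratings[0])  # number of panel members
    if n < 2 or k < 2 or any(len(row) != k for row in ratings):
        raise ValueError("need a complete n x k table with n, k >= 2")
    grand = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    col_means = [sum(row[j] for row in ratings) / n for j in range(k)]
    # Two-way ANOVA sums of squares
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ss_error = ss_total - ss_rows - ss_cols
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )


# Worked example from Shrout & Fleiss (1979): 6 targets rated by 4 judges
example = [
    [9, 2, 5, 8],
    [6, 1, 3, 2],
    [8, 4, 6, 8],
    [7, 1, 2, 6],
    [10, 5, 6, 9],
    [6, 2, 4, 7],
]
print(round(icc_2_1(example), 2))  # 0.29
```

Applied separately to the premeeting and postmeeting score tables, a coefficient of this kind rises as panel members converge on the same scores for each network.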

Discussion

This study demonstrates that diverse impacts of different quality improvement activities across a range of clinical focus areas can be assessed using an expert consensus approach. Despite challenges relating to a lack of common indicators of patient care, difficulty in making comparisons across diverse clinical disciplines and the wide range of impacts achieved by the clinical networks, the EXPAND method provided a structure for synthesising and discussing rating scores for impacts on improving quality of care and facilitating system-wide change.

The key strength of this study was the novel application of accepted systematic consensus methods13,15 to assessing the impact of diverse quality improvement activities initiated by a broad range of networks. When consensus methods are used for clinical applications, panel members who are more familiar with the procedures under review tend to rate them more favourably.16,17 This highlights the importance of rigour in selecting an experienced, independent, multidisciplinary panel of experts. The EXPAND method panel members did not have specific knowledge about the clinical networks in the study and had no conflicts of interest with the networks; the selection of our experts was therefore a strength of the study.

A potential limitation of the study was that clinical network impacts were self-reported by network managers and network co-chairs. To reduce response bias, documentary evidence was required for each clinical network impact; the validation substudy demonstrated that the self-reported network impacts were accurate and that the networks played an important role in achieving the impacts.6,7

However, the quality of the documentary evidence of network impact varied widely across clinical networks, which is a limitation of this study. This may be partly because we sought documentation from clinical networks retrospectively. Our measures of impact on improving quality of care and facilitating system-wide change were therefore restricted to the evidence available at the time of the study, and clinical network impact may be underreported as a result. During the study period, clinical networks had no organisational requirement to collect rigorous evaluation data. Future research on quality improvement activities would be strengthened if evaluation data collection were mandated as part of those activities. Another limitation is that, because of resource constraints, we were unable to assess the test–retest reliability of the EXPAND method panel members’ ratings, either with the same experts or with different experts (interpanel reliability). Further, validity could not be assessed. This study represents the first use of the EXPAND method; future research will need to assess the reliability and predictive validity of this approach.

Quality improvement initiatives may include a range of interventions and address issues at the level of service structures and processes, or at the level of the patient care received.18-22 Because quality improvement can take so many different forms, it is inherently difficult to assess the quality of the design of initiatives in any meaningful way.20 The EXPAND method contributes to the existing literature on measuring quality improvement by providing a new method that is applicable across heterogeneous initiatives for which gold standard evidence is lacking. Over time, the outcomes assessed in this study could be supplemented with indicators of structure (e.g. capacity for providing services) and process (e.g. how well a service was delivered),23 and with measures such as patient experience and patient-reported outcomes. Future studies could also use the rating scale that emerged through expert panel discussion: scores 1–3 indicated limited impact, scores 4–6 indicated moderate impact, and scores 7–9 indicated high impact.

We adapted established expert consensus methodologies to design the EXPAND method. Other adaptations, such as the SEaRCH expert panel process, which focus on assessing clinical appropriateness and setting research agendas, have been designed to be cost-effective, efficient and transparent.24 The EXPAND method complements the SEaRCH approach through its focus on assessing the impact of quality improvement initiatives. Over time, operational features of the SEaRCH approach could be added to the EXPAND method, such as the use of technology to enter scores, and teleconferences for meetings.

The clinical networks studied were found to improve quality of care and facilitate system-wide change, but the magnitude and types of impacts varied. The key insight arising from the main study for which the EXPAND method was developed (Figure 1) is that the clinical networks with the greatest impact were those that had a defined and commonly held purpose; a strategy to address priorities and evaluate impact; and inclusive involvement of patients, community groups, health services and hospital management in program development and rollout. These results have been fed back to the agency responsible for commissioning and supporting NSW clinical networks.

Future studies using the EXPAND method should test the reliability and validity of the method. Opportunities to test the EXPAND method in studies where a gold standard measure of impact is available, such as hospital mortality rates, will improve its usefulness for evaluating clinical networks, quality improvement activities more generally, and other areas of research where evidence is variable or limited.

Conclusion

The study demonstrates that, although there are challenges in conceptualising and operationalising the measurement of quality improvement activities, the EXPAND method can be used to evaluate the impact of clinical networks in diverse clinical focus areas to inform healthcare planning, delivery and performance. Further research is needed to assess its reliability and validity.

Acknowledgements

The authors wish to acknowledge the contribution of the clinical network managers and co-chairs and the Agency for Clinical Innovation executive for participating in this research. The authors are grateful for the contributions of the Clinical Network Research Group, which provided an ongoing critique and intellectual contributions through its involvement in the study.

This research was funded by the National Health and Medical Research Council of Australia (NHMRC) through its partnership project grant scheme (Grant ID: 571447). The Agency for Clinical Innovation also provided funds to support this research as part of the NHMRC partnership project grant scheme.

Peer review and provenance

Externally peer reviewed, not commissioned.

Copyright:

© 2018 Dominello et al. This article is licensed under the Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International Licence, which allows others to redistribute, adapt and share this work non-commercially provided they attribute the work and any adapted version of it is distributed under the same Creative Commons licence terms.

References

1. Haines M, Brown B, Craig J, D'Este C, Elliott E, Klineberg E, et al. Determinants of successful clinical networks: the conceptual framework and study protocol. Implement Sci. 2012;7:16.

2. Brown B, Patel C, McInnes E, Mays N, Young J, Haines M. The effectiveness of clinical networks in improving quality of care and patient outcomes: a systematic review of quantitative and qualitative studies. BMC Health Serv Res. 2016;16:360.

3. McInnes E, Middleton S, Gardner G, Haines M, Haertsch M, Paul CL, et al. A qualitative study of stakeholder views of the conditions for and outcomes of successful clinical networks. BMC Health Serv Res. 2012;12:49.

4. Brown B, Haines M, Middleton S, Paul C, D'Este C, Klineberg E, et al. Development and validation of a survey to measure features of clinical networks. BMC Health Serv Res. 2016;16(1):531.

5. McInnes E, Haines M, Dominello A, Kalucy D, Jammali-Blasi A, Middleton S, et al. What are the reasons for clinical network success? A qualitative study. BMC Health Serv Res. 2015;15:497.

6. Haines MM, Brown B, D'Este CA, Yano EM, Craig CJ, Middleton S, et al, on behalf of the Clinical Networks Research Group. Improving the quality of healthcare: a cross-sectional study of the features of successful clinical networks. Public Health Res Pract. 2018;28(4):e28011803.

7. Kalucy D. Determinants of effective clinical networks: validation sub-study [Masters thesis]. Sydney: University of New South Wales; 2013.

8. Campbell SM, Braspenning J, Hutchinson A, Marshall MN. Research methods used in developing and applying quality indicators in primary care. BMJ. 2003;326(7393):816–9.

9. Milat AJ, Laws R, King L, Newson R, Rychetnik L, Rissel C, et al. Policy and practice impacts of applied research: a case study analysis of the New South Wales Health Promotion Demonstration Research Grants Scheme 2000–2006. Health Res Policy Syst. 2013;11:5.

10. The Sax Institute. What have the clinical networks achieved and who has been involved? 2006–2008. Sydney: NSW Agency for Clinical Innovation; 2011.

11. Institute of Medicine Committee on Quality of Health Care in America. Crossing the quality chasm: a new health system for the 21st Century. Washington (DC): National Academies Press (US); 2001.

12. Shekelle P. The appropriateness method. Med Decis Making. 2004;24(2):228–31.

13. Rubenstein LV, Fink A, Yano EM, Simon B, Chernof B, Robbins AS. Increasing the impact of quality improvement on health: an expert panel method for setting institutional priorities. Jt Comm J Qual Improv. 1995;21(8):420–32.

14. Gagliardi AR, Simunovic M, Langer B, Stern H, Brown AD. Development of quality indicators for colorectal cancer surgery, using a 3-step modified Delphi approach. Can J Surg. 2005;48(6):441–52.

15. Fink A, Kosecoff J, Chassin M, Brook RH. Consensus methods: characteristics and guidelines for use. Am J Public Health. 1984;74(9):979–83.

16. Campbell SM, Hann M, Roland MO, Quayle JA, Shekelle PG. The effect of panel membership and feedback on ratings in a two-round Delphi survey: results of a randomized controlled trial. Med Care. 1999;37(9):964–8.

17. Coulter I, Adams A, Shekelle P. Impact of varying panel membership on ratings of appropriateness in consensus panels: a comparison of a multi- and single disciplinary panel. Health Serv Res. 1995;30(4):577–91.

18. Ovretveit J, Gustafson D. Evaluation of quality improvement programmes. Qual Saf Health Care. 2002;11(3):270–5.

19. Speroff T, James BC, Nelson EC, Headrick LA, Brommels M. Guidelines for appraisal and publication of PDSA quality improvement. Qual Manag Health Care. 2004;13(1):33–9.

20. Rubenstein LV, Hempel S, Farmer MM, Asch SM, Yano EM, Dougherty D, et al. Finding order in heterogeneity: types of quality-improvement intervention publications. Qual Saf Health Care. 2008;17(6):403–8.

21. Danz MS, Rubenstein LV, Hempel S, Foy R, Suttorp M, Farmer MM, et al. Identifying quality improvement intervention evaluations: is consensus achievable? Qual Saf Health Care. 2010;19(4):279–83.

22. Brook RH, McGlynn EA, Shekelle PG. Defining and measuring quality of care: a perspective from US researchers. Int J Qual Health Care. 2000;12(4):281–95.

23. Hung KY, Jerng JS. Time to have a paradigm shift in health care quality measurement. J Formos Med Assoc. 2014;113(10):673–9.

24. Coulter I, Elfenbaum P, Jain S, Jonas W. SEaRCH expert panel process: streamlining the link between evidence and practice. BMC Res Notes. 2016;9:16.